For 150 years we shared a beautiful, collective misunderstanding about what a camera was.

From the first Kodak to the early iPhone, we believed the camera was a device built to freeze time: trapping a fading sunset, capturing a child’s first steps, or preserving a fleeting smile on film or a flat grid of pixels. It was a tool built entirely for human nostalgia.

That era is over.

If you want to understand the architecture of the next fifty years, you have to let go of the idea that cameras are for taking pictures. To the engineers building the future, a camera is no longer a recording device.

A camera is now a sensory organ for artificial intelligence.

The technology driving this shift is Computer Vision.

It is arguably the most important family of algorithms of our lifetime, and it works by doing something incredibly simple yet profoundly world-changing: it translates the physical world into math. To understand Computer Vision, you have to understand the difference between how you see the world and how a machine sees it.

When you look out a window and see a woman running through the rain to catch a cab, your brain tells you a story. You understand her urgency. You understand the wet pavement. A computer does not understand stories. It only understands numbers. For decades, computers were blind. They sat in dark rooms, waiting for humans to type numbers into a keyboard so they could process them.
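To make "it only understands numbers" concrete: to a computer, a photo is nothing but a grid of brightness values. The tiny 4×4 grayscale "image" below is made up for illustration (real frames contain millions of pixels, usually with three color channels), and the hypothetical helpers locate the bright "object" in it with plain arithmetic. This is a crude thresholding sketch, not how modern neural networks detect objects.

```python
# A made-up 4x4 grayscale "image": each number is one pixel's brightness (0 = black, 255 = white).
image = [
    [12,  15,  20,  18],
    [14, 200, 210,  19],
    [13, 205, 220,  17],
    [11,  16,  21,  15],
]

def bright_bounding_box(img, threshold=128):
    """Return (top, left, bottom, right) around every pixel brighter than threshold, or None."""
    coords = [(r, c) for r, row in enumerate(img) for c, v in enumerate(row) if v > threshold]
    if not coords:
        return None
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return (min(rows), min(cols), max(rows), max(cols))

def mean_brightness(img):
    """Average brightness across every pixel in the grid."""
    total = sum(sum(row) for row in img)
    count = sum(len(row) for row in img)
    return total / count

print(bright_bounding_box(image))  # (1, 1, 2, 2)
print(mean_brightness(image))      # 64.125
```

The machine never "sees" a bright square; it only finds that rows 1 to 2 and columns 1 to 2 hold numbers above a cutoff.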

Computer Vision is the technology that finally gave the machine eyes.

When you feed that same scene, captured on video, into a modern Computer Vision network, it strips away the human narrative and extracts pure geometry. In milliseconds, the algorithm estimates her skeleton in 3D space. It calculates the velocity of her stride. It measures the angles of her joints, the trajectory of the cab, and the distance between them.
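Once a pose-estimation model has produced 3D keypoints, the geometry in that paragraph boils down to a few lines of vector math. The sketch below assumes such a model has already returned hip, knee, and ankle coordinates in meters; the coordinates, the 30 fps frame rate, the cab position, and the helper names are all invented for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]  # (x, y, z) in meters

def sub(a: Point, b: Point) -> Point:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def norm(v: Point) -> float:
    return math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)

def distance(a: Point, b: Point) -> float:
    """Straight-line distance between two points, e.g. runner and cab."""
    return norm(sub(a, b))

def joint_angle(a: Point, joint: Point, c: Point) -> float:
    """Angle in degrees at `joint` between the segments joint->a and joint->c."""
    u, v = sub(a, joint), sub(c, joint)
    cos_theta = (u[0] * v[0] + u[1] * v[1] + u[2] * v[2]) / (norm(u) * norm(v))
    cos_theta = max(-1.0, min(1.0, cos_theta))  # clamp tiny floating-point overshoot
    return math.degrees(math.acos(cos_theta))

def speed(p_prev: Point, p_next: Point, fps: float) -> float:
    """Average speed in m/s of one keypoint between two consecutive frames."""
    return distance(p_prev, p_next) * fps

hip, knee, ankle = (0.0, 1.0, 0.0), (0.0, 0.5, 0.0), (0.5, 0.5, 0.0)
print(round(joint_angle(hip, knee, ankle), 1))        # 90.0 (knee bent at a right angle)
print(round(speed(hip, (0.1, 1.0, 0.0), fps=30), 2))  # 3.0 (hip moved 10 cm in 1/30 s)
print(distance(hip, (3.0, 1.0, 4.0)))                 # 5.0 (meters to the hypothetical cab)
```

Real keypoints are noisy, so in practice you would smooth speeds over many frames rather than trust a single pair.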

Computer Vision is a real-time translator.

It takes the messy, chaotic, physical friction of the real world (gravity, speed, depth, and human movement) and instantly converts it all into a live, searchable digital spreadsheet.
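A minimal sketch of the "spreadsheet" idea: every tracked object becomes one row per frame, and questions about the scene become filters over those rows. The `Detection` columns, the sample rows, and the `near` query are hypothetical; a real tracker's output format would differ.

```python
import math
from dataclasses import dataclass

@dataclass
class Detection:
    """One row of the hypothetical 'spreadsheet': one tracked object in one frame."""
    frame: int
    track_id: int
    label: str
    x: float  # position in meters (illustrative, camera-centered)
    y: float
    z: float

rows = [
    Detection(0, 1, "person", 0.0, 0.0, 0.0),
    Detection(0, 2, "car", 3.0, 0.0, 4.0),
    Detection(1, 1, "person", 0.6, 0.0, 0.8),
    Detection(1, 2, "car", 2.4, 0.0, 3.2),
    Detection(1, 3, "dog", 10.0, 0.0, 0.0),
]

def near(rows, frame, track_id, radius):
    """Labels of other objects within `radius` meters of `track_id` in a given frame."""
    in_frame = [r for r in rows if r.frame == frame]
    anchor = next(r for r in in_frame if r.track_id == track_id)
    return sorted(
        r.label
        for r in in_frame
        if r.track_id != track_id
        and math.dist((r.x, r.y, r.z), (anchor.x, anchor.y, anchor.z)) <= radius
    )

print(near(rows, frame=1, track_id=1, radius=3.5))  # ['car']
```

The question "is anything close to the runner right now?" becomes a filter over rows, the same way you would query a table.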

MAKING THE REAL WORLD "TRACKABLE"

Until now, the digital world and the physical world were separate. If you wanted to interact with the digital world, you had to stop what you were doing, pull out a screen, and click a button. That friction slows down human progress.

Computer Vision deletes the friction. It makes the physical world "trackable."

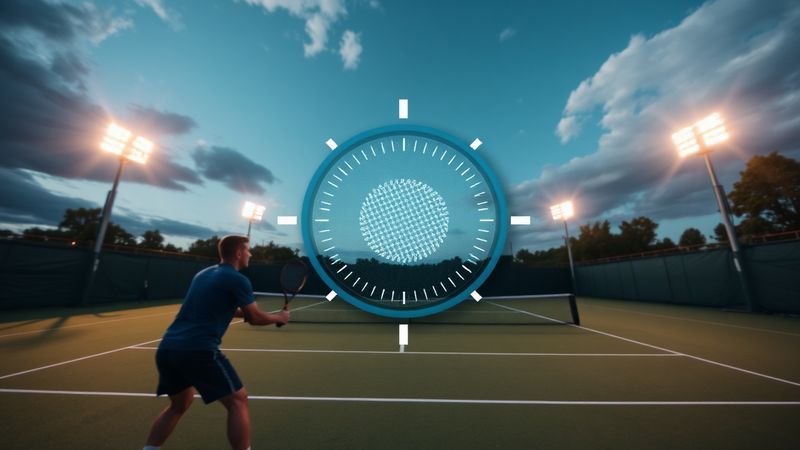

Think about a Tesla. Its driver-assistance software doesn’t navigate by looking at a map alone. It uses an array of cameras running Computer Vision to translate a chaotic intersection of pedestrians, stray dogs, and red lights into something like a mathematical video game, allowing a 4,000-pound steel machine to navigate it.

Now consider Amazon Go stores.

You walk in, pick up a sandwich, and walk out.

- There are no cashiers.

- There are no barcode scanners.

The cameras in the ceiling, working alongside weight sensors in the shelves, track your body as you move, see your hand grab the item, and automatically charge your account. Your physical movement is the transaction. Computer Vision is the bridge. It allows computers to finally understand, navigate, and interact with the physical space we live in.
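A toy sketch of "your physical movement is the transaction": given a tracked wrist position per frame, count a pick each time the wrist enters the 3D zone around a shelf slot. This is a hypothetical rule for illustration, not Amazon's method; the `Zone` boxes, coordinates, and `picked_items` helper are invented, and a real system would confirm each pick (for example with shelf weight sensors) and handle items being put back.

```python
from dataclasses import dataclass

@dataclass
class Zone:
    """Hypothetical axis-aligned 3D box (meters) around one shelf slot."""
    item: str
    lo: tuple  # (x, y, z) lower corner
    hi: tuple  # (x, y, z) upper corner

    def contains(self, p):
        return all(l <= v <= h for l, v, h in zip(self.lo, p, self.hi))

def picked_items(wrist_track, zones):
    """Toy rule: each time the wrist enters a slot's zone, count one pick of that item."""
    cart, inside = [], set()
    for p in wrist_track:  # one wrist position per frame
        for z in zones:
            if z.contains(p):
                if z.item not in inside:  # count only the moment of entry
                    cart.append(z.item)
                    inside.add(z.item)
            else:
                inside.discard(z.item)  # wrist left, so a later re-entry counts again
    return cart

zones = [
    Zone("sandwich", (0.0, 1.0, 0.0), (0.5, 1.5, 0.5)),
    Zone("soda", (1.0, 1.0, 0.0), (1.5, 1.5, 0.5)),
]
track = [(0.2, 0.5, 0.2), (0.2, 1.2, 0.2), (0.25, 1.2, 0.2), (0.2, 0.5, 0.2), (1.2, 1.2, 0.2)]
print(picked_items(track, zones))  # ['sandwich', 'soda']
```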

THE OPEN-SOURCE LEAK (Why You Hold the Power)

When you hear this, it is easy to feel small. It sounds like a billion-dollar technology reserved for mega-corporations building a sci-fi future. But here is the greatest architectural triumph of our time: The monopoly is already broken.

Not long ago, running cutting-edge Computer Vision in real time required expensive, specialized camera rigs and racks of powerful servers. Today, much of that technology is sitting in your pocket.

Because computer chips keep getting smaller, faster, and cheaper, the Neural Processing Unit (NPU) inside a standard modern smartphone is now powerful enough to run 3D body-tracking models locally. You don't need a server farm. You don't even need an internet connection.

The billion-dollar code has, in effect, leaked to the public: open-source libraries such as OpenCV and Google's MediaPipe put this capability in anyone's hands.

This is why we build. We don't have to be passive consumers in a world mapped by corporate cameras. We can take this same technology, the ultimate tool for measuring and understanding physical reality, and use it for ourselves. We can build apps that analyze our posture to help ease back pain. We can track our own biomechanics to reach our athletic potential. We can automate our own small businesses.

The camera is no longer just a lens.

It is the ultimate tool for decoding reality. The code is in your hands.

Now, let's see what you can build with it.